Protecting the craft: How TrueTale scales the 'writer’s memory'

Storytelling is a human art form, and the Glasgow-based startup TrueTale is on a mission to ensure it stays that way. They’ve built an intelligent writing ecosystem that acts as a “permanent memory” for authors.

To scale this “memory” without data loss, TrueTale replaced traditional request-response patterns with a high-performance message broker: LavinMQ.

The challenge: Scaling the ‘Story Bible’

The idea for TrueTale didn’t come from a technical requirement, but from a personal struggle. The founder, Andrea Giulio Cerasoni, was deep into writing the manuscript for a fantasy book, a complex story of over 60,000 words, when he realized he was spending more time cross-referencing his own notes than actually writing. It’s in times of struggle that the best ideas take form: in this case, a writing assistant with a better memory than you.

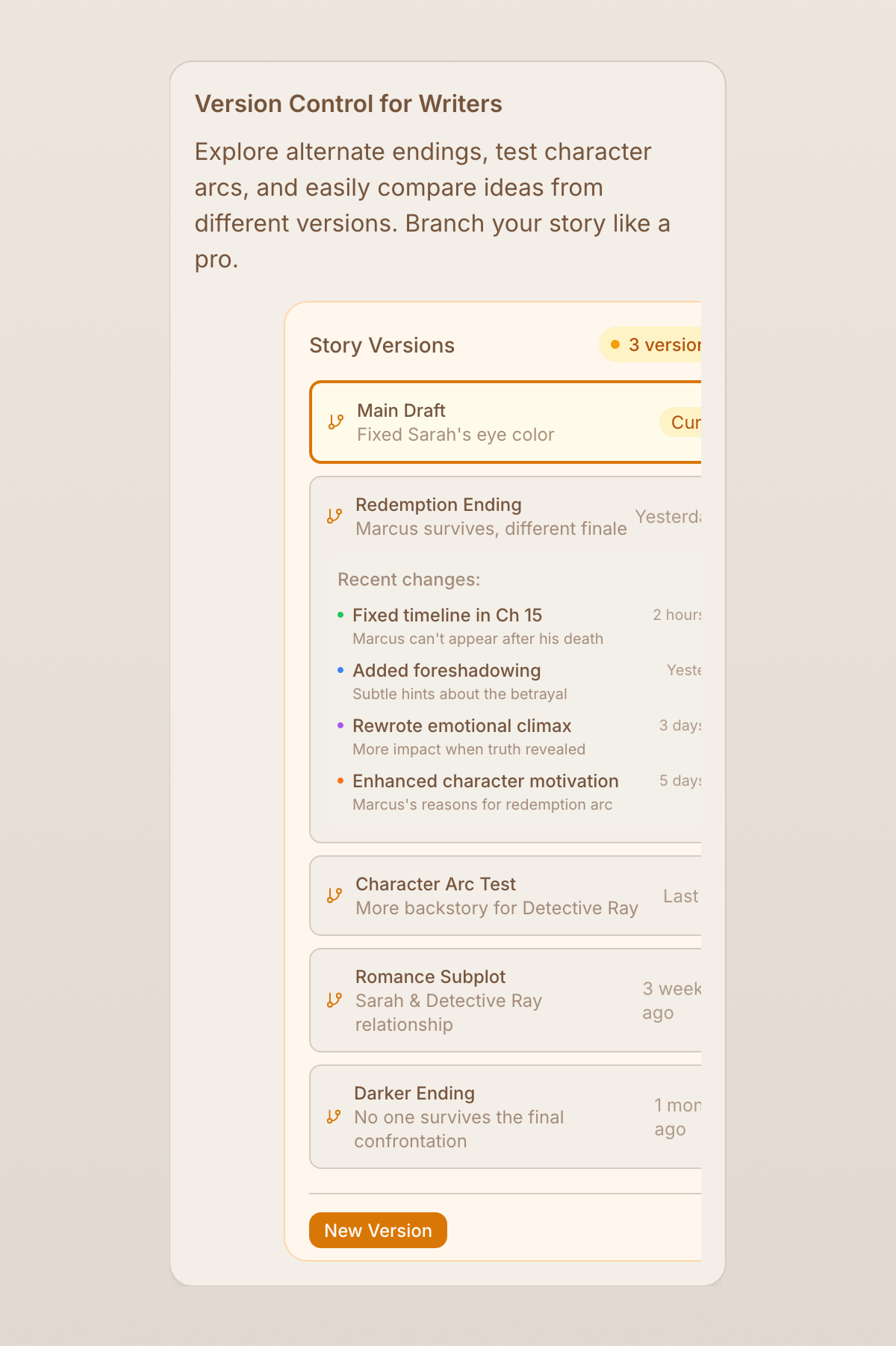

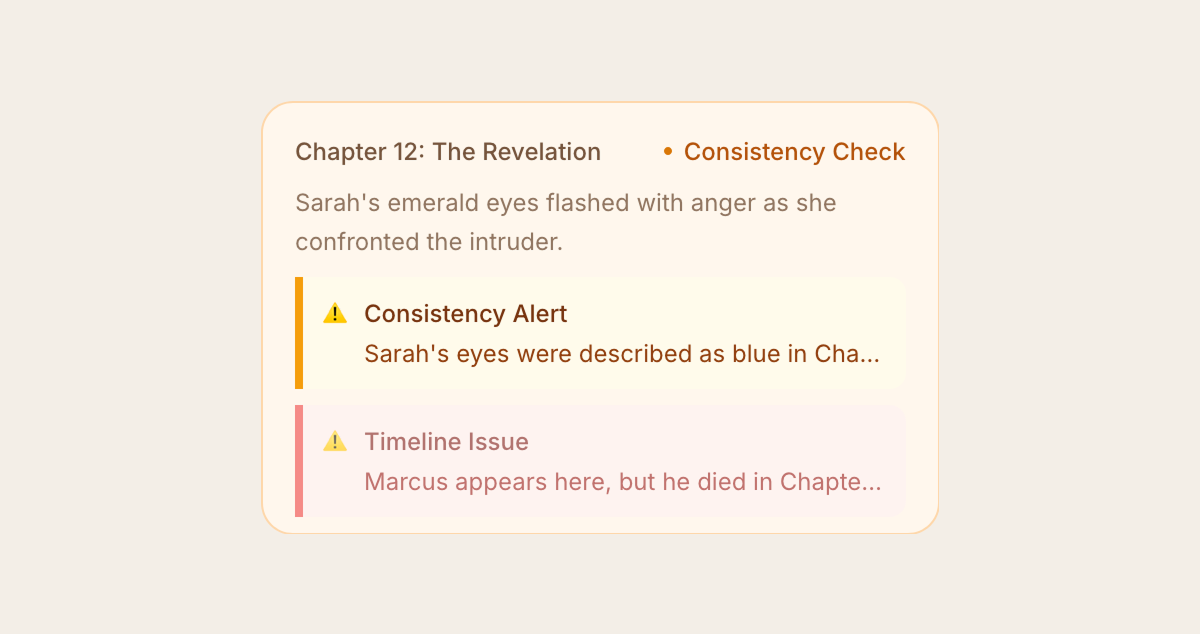

“TrueTale is non-generative; it doesn’t write the story for you,” Andrea explains. “Instead, it tracks every detail, like the emerald green of a character’s eyes or a secret whispered in Chapter 2, and maps the story so the author can focus on the craft without worrying about inconsistencies.”

The TrueTale dashboard provides authors with near real-time, organized updates on what’s added to the story. It visualizes characters, places, objects, and events, and shows how they interact. If the story suffers from timeline or consistency issues, the author receives a notification and can easily trace story essentials back by filtering the search results.

Updating this “Story Bible” in the background created a technical challenge. In Andrea’s case, every time he made a change to that 60,000-word draft, the system had to re-process the entire narrative context.

“I realized we couldn’t scale a system that relied on everything working perfectly every second. If the server was busy for a moment, the data shouldn’t just vanish. We needed a way to manage the flow of information that respected the writer’s work.”

The limits of synchronous REST

Initially, TrueTale’s “Minimum Viable Product” (MVP) relied on direct REST calls between services, which created a tightly coupled system. It worked at first, but it soon struggled to scale.

“We quickly ran into issues,” explains Andrea. “Users were losing edits to their manuscripts, and analysis requests sometimes never reached the pipeline at all.”

The synchronous nature of REST created a fragile chain. If one service failed, everything broke. Projects would get stuck halfway through, forcing the team to manually reset user databases, a time-consuming and frustrating process. Even worse, the lack of coordination meant that analysis sometimes ran twice on the same edit, creating duplicates and confusion in the Story Bible, which was meant to provide clarity.

Finding a buffer: The shift to LavinMQ

To address this, TrueTale introduced LavinMQ as an intelligent buffer between its services. By shifting to a message queue, they decoupled the act of writing from the heavy lifting of narrative analysis.

Now, when a writer makes a change, that edit is safely queued. By implementing consistent hashing, TrueTale can process thousands of edits across multiple users in parallel while maintaining strict sequential guarantees for every single manuscript.
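To make the idea concrete, here is a minimal in-process sketch of consistent hashing, assuming the manuscript ID is used as the routing key. The queue names, the `HashRing` class, and the number of ring points are illustrative assumptions, not TrueTale’s actual setup; in a real deployment the routing would be handled by the broker (LavinMQ offers a consistent hash exchange type) rather than by application code.

```python
import bisect
import hashlib


def _hash(key: str) -> int:
    # Stable hash: Python's built-in hash() is randomized per process.
    return int.from_bytes(hashlib.sha256(key.encode("utf-8")).digest()[:8], "big")


class HashRing:
    """Hypothetical helper: maps routing keys to queue names on a hash ring."""

    def __init__(self, queues, points_per_queue=100):
        # Each queue gets many points on the ring so keys spread evenly.
        self._ring = sorted(
            (_hash(f"{queue}#{i}"), queue)
            for queue in queues
            for i in range(points_per_queue)
        )
        self._points = [point for point, _ in self._ring]

    def queue_for(self, routing_key: str) -> str:
        # Pick the first ring point after the key's hash, wrapping around.
        index = bisect.bisect_right(self._points, _hash(routing_key)) % len(self._ring)
        return self._ring[index][1]


# Assumed queue names, one per analysis worker.
ring = HashRing(["analysis-0", "analysis-1", "analysis-2"])

edits = [
    ("manuscript-42", "edit 1"),
    ("manuscript-7", "edit 1"),
    ("manuscript-42", "edit 2"),
]

# Route each edit by manuscript ID; arrival order is kept within each queue.
queues = {}
for manuscript_id, edit in edits:
    queues.setdefault(ring.queue_for(manuscript_id), []).append((manuscript_id, edit))
```

Because every edit for a given manuscript hashes to the same queue, a single consumer per queue sees that manuscript’s edits in order, while different manuscripts can be processed in parallel on other queues.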

If a background service is busy, the edit simply waits in the queue until the system is ready. If an update fails repeatedly, it is moved to a Dead Letter Queue (DLQ), which acts as a safety net: instead of the data simply disappearing, it is set aside for review. This gives the team full visibility into exactly which edits failed and why, enabling them to provide faster support and ensure no word is ever lost.
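The retry-then-dead-letter behaviour can be sketched in a few lines of plain Python. This is a simulation under stated assumptions, not the broker’s API: the retry limit of three, the message shape, and the `analyse` handler are all hypothetical. With an AMQP broker, dead-lettering is normally configured on the queue itself (for example through the `x-dead-letter-exchange` argument) rather than written by hand.

```python
from collections import deque

MAX_ATTEMPTS = 3  # assumed retry limit; the article does not state one


def drain(queue, dead_letters, handler):
    """Process every message; park repeatedly failing ones with the error."""
    processed = []
    while queue:
        message = queue.popleft()
        try:
            handler(message["body"])
            processed.append(message["body"])
        except Exception as error:
            message["attempts"] += 1
            if message["attempts"] >= MAX_ATTEMPTS:
                # Keep the payload and the failure reason for later review.
                dead_letters.append({**message, "reason": str(error)})
            else:
                queue.append(message)  # requeue for another attempt
    return processed


def analyse(body):
    # Hypothetical handler: edits containing "corrupt" always fail.
    if "corrupt" in body:
        raise ValueError("could not parse edit")


queue = deque(
    {"body": body, "attempts": 0}
    for body in ["edit A", "corrupt edit", "edit B"]
)
dead_letters = []
processed = drain(queue, dead_letters, analyse)
```

After the run, the two healthy edits are processed and the failing one sits in `dead_letters` together with its attempt count and error message, which is the visibility the team describes.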

From REST to producer-consumer

The migration from REST to a message-driven flow was quite straightforward.

“The implementation was extremely easy,” says Andrea. “The Spring Boot AMQP library we used is 100% compatible with LavinMQ, so the integration felt native from the start.” With help from Cursor and LLMs, the team moved its REST calls to a producer-consumer architecture in just a few days.
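The shape of a producer-consumer flow can be shown with a small, broker-free sketch. It uses Python’s standard `queue` and `threading` modules as a stand-in for LavinMQ; the names `edits`, `story_bible`, and `consumer` are illustrative assumptions, and TrueTale’s real services use Spring Boot AMQP rather than this code.

```python
import queue
import threading

edits = queue.Queue()   # stands in for a broker queue
story_bible = []        # stands in for the analysis results


def consumer():
    # Pull edits one at a time, at the worker's own pace.
    while True:
        edit = edits.get()
        if edit is None:  # sentinel value: no more work
            edits.task_done()
            break
        story_bible.append(f"analysed: {edit}")
        edits.task_done()


worker = threading.Thread(target=consumer)
worker.start()

# The producer only enqueues; it never waits for the analysis to finish.
for edit in ["chapter 1 draft", "chapter 2 draft"]:
    edits.put(edit)

edits.put(None)   # tell the worker to stop
edits.join()      # block until every queued item has been handled
worker.join()
```

The key difference from a direct REST call is that the producer returns as soon as the edit is queued, so a slow or briefly unavailable consumer delays the analysis instead of losing the edit.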

Efficiency: Cutting infrastructure costs by 66%

By letting events sit in a queue until computing power is available, the team no longer needs to maintain oversized, expensive server instances just to handle spikes in activity. This allowed them to reduce their microservice instance sizes by two-thirds, cutting costs from $75 to $25 per month.

The impact on the bottom line was immediate. Beyond reducing server costs, TrueTale solved the “AI Leak.” Previously, roughly 15% of their LLM token costs were wasted due to network failures. “By ensuring every edit is delivered correctly the first time, we’ve eliminated the cost of re-analyzing manuscripts due to broken REST calls,” Andrea said.

For Andrea, this wasn’t just about the budget: “We’re glad this is the more ethical choice: we avoid wasting resources on big instances just to tackle occasional spikes.”

Moving forward

With the “stuck” analyses of the early MVP gone, the system now runs reliably even during high-traffic periods. By moving to a producer-consumer setup with LavinMQ, the team was able to delete hundreds of lines of complex “retry” logic and circuit breakers that were previously required to keep the REST services alive.

The app is now leaner, saving the team about five hours every week on DevOps and support tasks. The infrastructure has become invisible, allowing authors to focus entirely on their story, confident that every detail, no matter how small, will be remembered correctly by the Story Bible.

“Since moving the core of our narrative engine to LavinMQ, we’ve had zero data loss and zero downtime. It just works.” — Andrea Cerasoni, Founder.

Lovisa Johansson