Standby Replication

It is good practice to set up a messaging system so that it can recover from failures such as data center outages, system failures or data corruption.

LavinMQ’s standby replication feature was built to cater for those needs - it can help you recover from data center outages, system failures and in some scenarios, even data corruption.

What is standby replication in LavinMQ?

Standby replication lets you run a leader LavinMQ node together with one or more follower nodes. Any changes made on the leader node, including definitions (queues, exchanges, vhosts, users, etc.) as well as messages (published and consumed), are replicated to the follower nodes in real time.

When can I use the standby replication feature in LavinMQ?

This feature is useful whenever you’d like to have an up-to-date backup of a node’s data.

In the unfortunate event of data corruption on a LavinMQ node, failure of the node or data center outage, standby replication allows for recovery with the backup server(s). When the leader is unavailable, an administrator can manually make one of the followers the leader and point other followers to it.

Benefits of the standby replication feature in LavinMQ

By incorporating standby replication, LavinMQ delivers several key benefits:

- Data Redundancy: Replicating changes and messages to follower servers ensures data redundancy, protecting against permanent data loss.

- Fault Tolerance: The ability to manually promote a follower to leader lets the system recover from failures. Recovery is not instantaneous, since promotion is a manual step, but it guards against permanent data loss and limits disruption.

How to set up standby replication in LavinMQ

In this section, we will set up replication between two LavinMQ nodes: the leader and the follower. Although we work with just one follower here, you can extend this to as many followers as you want.

Prerequisites

- As we will be testing this feature locally, to follow along, you need to have LavinMQ installed on your machine.

Take the following steps to set up replication between two LavinMQ nodes:

Step 1: Enable replication listener on a leader node

- Start the leader node with:

lavinmq --data-dir ~/lavin-replication-testing/leader --replication-bind 0.0.0.0 --replication-port 5679

- Feel free to change the path passed to --data-dir to a different location where you’d like to have the data directory for the node created.

- If everything goes well, the leader node should indicate in the logs that the replication listener has been enabled:

2023-07-05T09:32:25.569257Z INFO amqpserver Listening on 127.0.0.1:5672

2023-07-05T09:32:25.569284Z INFO replication Listening on 0.0.0.0:5679

- Alternatively, you can enable the replication listener on the leader node from its lavinmq.ini file like so:

[replication]

bind = 0.0.0.0

port = 5679

Step 2: Configure the follower node to connect to the leader

- Start the follower node with:

lavinmq --data-dir ~/lavin-replication-testing/follower --follow tcp://localhost:5679

- Again, feel free to change the path passed to --data-dir to a different location where you’d like to have the data directory for the node created.

- When you run the follower, LavinMQ expects to find a file named .replication_secret in the follower’s data directory. This file contains the shared password needed by all the nodes in the replication arrangement. At this point it does not exist in the follower’s data directory, so the command above will most likely fail.

- Thus, copy the .replication_secret file from the leader’s data directory to the follower’s data directory and restart the follower.

- If everything goes well, the follower node should indicate in the logs that all files have been synced and that the follower is streaming changes from the leader:

2023-07-05T10:57:00.083089Z INFO - replication: Files synced

2023-07-05T10:57:00.083105Z INFO - replication: Streaming changes

- Also note that you can configure the follower node to connect to the leader from the follower’s lavinmq.ini file as shown below:

[replication]

follow = tcp://localhost:5679
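The secret-copy step in Step 2 can be scripted. The following is a minimal sketch, assuming the data directories used in the commands above; the function name copy_replication_secret and the two variables are illustrative and not part of LavinMQ.

```shell
# Paths match the --data-dir values used in Steps 1 and 2; adjust to your setup.
LEADER_DIR="${LEADER_DIR:-$HOME/lavin-replication-testing/leader}"
FOLLOWER_DIR="${FOLLOWER_DIR:-$HOME/lavin-replication-testing/follower}"

# Hypothetical helper: copy the leader's secret into the follower's data dir.
# $1 = leader data dir, $2 = follower data dir
copy_replication_secret() {
  if [ ! -f "$1/.replication_secret" ]; then
    echo "no .replication_secret found in $1" >&2
    return 1
  fi
  mkdir -p "$2"
  # -p preserves the file's mode and timestamps
  cp -p "$1/.replication_secret" "$2/.replication_secret"
}
```

Call it as copy_replication_secret "$LEADER_DIR" "$FOLLOWER_DIR", then restart the follower with the command from Step 2. For nodes on different machines, the same file would have to be transferred over a secure channel instead of a local copy.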

Step 3: Verifying that the replication worked

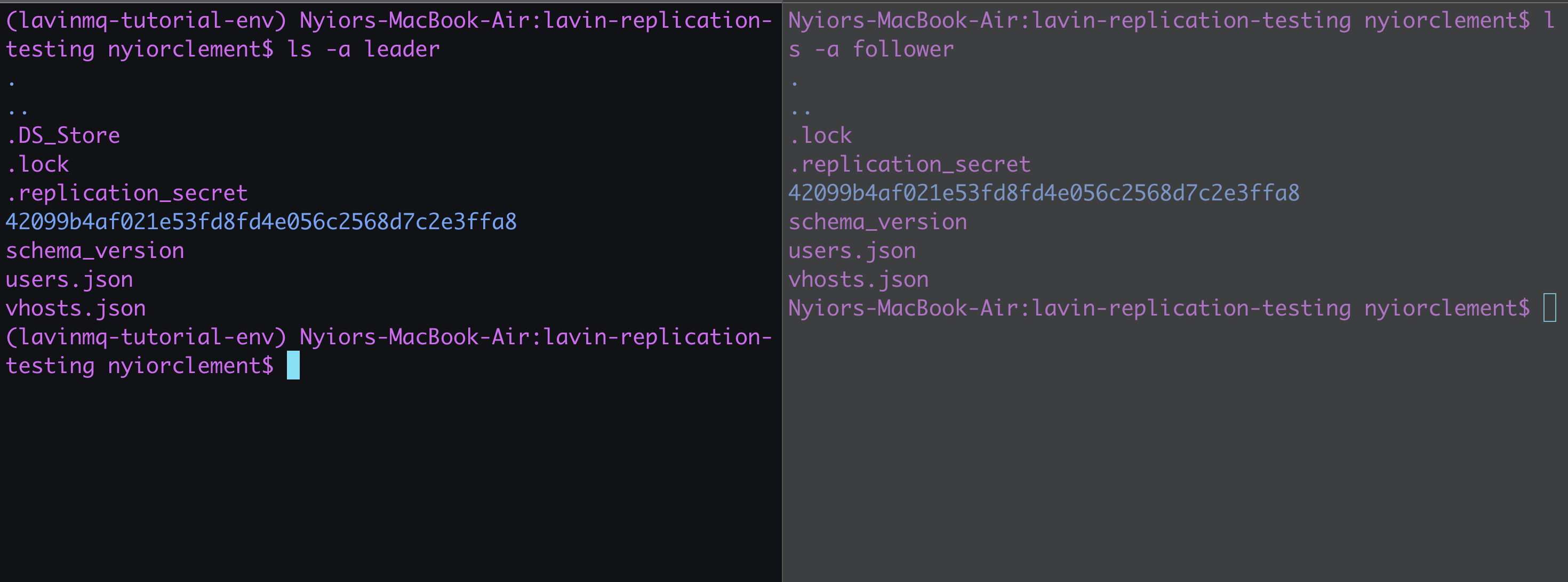

If the replication has worked, the follower should now have all the data from the leader’s data directory. You can verify this by comparing the leader and the follower’s data directory. See image below:

You can even test further by creating queues and publishing messages to them from the management interface of the leader node. This new data should be replicated on the leader node.
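The directory comparison can also be done from the command line. This is a rough sketch using the standard diff utility; the helper name dirs_match is illustrative. It assumes both nodes are idle while you compare: on a busy leader, or if a node keeps node-local files in its data directory, some differences may be expected.

```shell
# Hypothetical helper: recursively compare two data directories.
# Prints the names of files that differ or exist on only one side.
# Exit status 0 means the trees are identical.
# $1 = leader data dir, $2 = follower data dir
dirs_match() {
  diff -rq "$1" "$2"
}
```

For the local setup above, this would be run as dirs_match ~/lavin-replication-testing/leader ~/lavin-replication-testing/follower.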

How the standby replication works

In a nutshell, the replication of data from the leader to the follower happens in the manner described below. Keep in mind that we are abstracting away some of the low-level details here:

- When a follower node connects to the leader node:

  - The follower authenticates itself. If the authentication fails, the leader terminates the connection. The shared password comes into play here.

  - If the authentication passes, the leader securely sends a list of files in its data directory to the follower. The follower can then request files from the leader where necessary.

  - Once the follower has obtained all the required files, it notifies the leader, and the leader starts streaming the changes.

- Streaming changes here entails informing the follower about updates made on the leader. These updates could be anything from newly published messages or consumed messages, to files that should be deleted or rewritten.

- The follower then notifies the leader of successful replication once it has applied the new changes.

Leader election

The current implementation of the replication feature does not support an automatic fail-over mechanism where a follower becomes the new leader if the original leader goes offline (for whatever reason).

When the leader is unavailable, a follower could be made the leader in one of two ways:

- Manual leader election: An administrator can manually restart one of the followers as the leader with the --replication-bind 0.0.0.0 --replication-port 5679 arguments. The administrator can then point the other followers to this new leader.

- Automated leader election: Here, you can set up an external system that monitors your replication setup and promotes a follower when the leader fails.
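As an illustration of the automated option, an external watchdog could probe the leader's replication port and only suggest promotion after several consecutive failures. The sketch below is one possible shape, not a LavinMQ feature: the helpers leader_is_up and should_promote, the hostname, and the data directory path are all hypothetical, and leader_is_up assumes bash and the coreutils timeout command are installed.

```shell
# Hypothetical watchdog sketch; these helper names are not part of LavinMQ.

# Succeeds if a TCP connection to host ($1) and port ($2) opens within 2 seconds.
# Relies on bash's /dev/tcp redirection and the coreutils timeout command.
leader_is_up() {
  timeout 2 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}

# Runs a check command up to $1 times, sleeping $2 seconds after each failure.
# The check command is everything after the first two arguments.
# Exit status 0 means every attempt failed, i.e. the leader looks down.
should_promote() {
  attempts="$1"
  interval="$2"
  shift 2
  n=0
  while [ "$n" -lt "$attempts" ]; do
    if "$@"; then
      return 1  # leader answered, so keep following
    fi
    n=$((n + 1))
    sleep "$interval"
  done
  return 0
}

# Example wiring (hostname and data dir are placeholders):
# if should_promote 3 5 leader_is_up leader.example.internal 5679; then
#   # stop the follower process first, then restart it as the new leader:
#   lavinmq --data-dir /path/to/follower-data --replication-bind 0.0.0.0 --replication-port 5679
# fi
```

Requiring several failed probes avoids promoting on a single dropped connection. A port check from one vantage point can still misjudge a network partition as a leader failure, so a real setup would likely want an additional safeguard before promoting.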

Wrap up

We’ve seen how the replication feature allows us to use LavinMQ in a more resilient manner. But beyond the replication feature, there is more to LavinMQ.

Ready to take the next steps? Here are some things you should keep in mind:

Managed LavinMQ instance on CloudAMQP

LavinMQ has been built with performance and ease of use in mind - we've benchmarked a throughput of about 1,000,000 messages/sec. You can try LavinMQ without any installation hassle by creating a free instance on CloudAMQP. Signing up is a breeze.

Help and feedback

We welcome your feedback and are eager to address any questions you may have about this piece or using LavinMQ. Join our Slack channel to connect with us directly. You can also find LavinMQ on GitHub.